Networking meets Agile Deployment

You know the feeling when you get a new idea and would like to start building right away, and then run into the roadblock of getting IP addresses allocated for a new VPC. But unless you get a CIDR from the network team, it is likely you will have to tear down everything and start from scratch later when you want to connect with other services or internal networks. Or simply answering the question “how many IPs do you need?” isn’t possible because you are still evaluating different architecture options. Would it be possible to get the best of both worlds? Start independently, without risking future connectivity.

Edit 26/7/2021: The original post had the primary and secondary CIDRs reversed. That would not have worked because of the restrictions on secondary CIDR ranges of a VPC. The CIDRs are now corrected in the text and diagrams. I guess this was just proof of how easy it is to get these wrong, and it emphasises the importance of network design.

Edit 6/8/2022: I published a CloudFormation template to implement VPCs similar to the ideas described in this post.

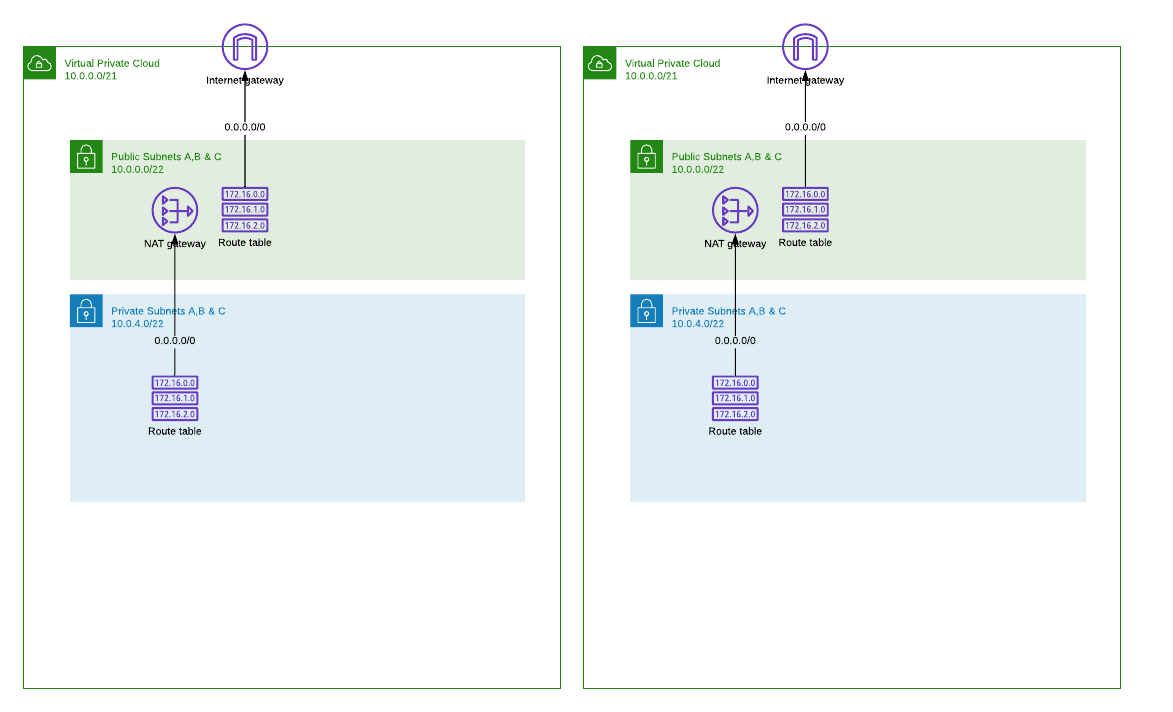

Isolated VPC

The starting point is a completely isolated VPC with public and private subnet layers. For readability, I haven’t drawn separate subnets for each availability zone, VPC endpoints or other details here.

An important detail here is that both (= all) VPCs use the same CIDR range (e.g. 10.0.0.0/21). This has some nice benefits and one serious limitation that I’m going to address later.

- As a developer, you can create VPCs when needed without depending on the network management team to allocate unique address space for you. It is also easy to allocate a segment large enough to ensure all future plans will fit in.

- As a network manager, you don’t have to worry about running out of routable CIDR ranges, or argue with developers about how many addresses they can get or really need for their project.

- But traffic cannot be routed between VPCs with overlapping CIDR ranges :-(

NOTE: The static primary CIDR must be selected carefully to ensure you can later expand the VPC with a secondary CIDR and add a routable subnet layer. In this example I have used a slice of 10.0.0.0/8, which is address space reserved for private networks. Please read the VPC CIDR restrictions before choosing the primary CIDR, as this would be very difficult to change later.
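Both the overlap problem and the primary/secondary restriction can be checked offline with Python's standard `ipaddress` module. The following is only a minimal sketch: the secondary range `100.64.12.0/26` is a made-up example, and `secondary_allowed` encodes a simplified reading of the AWS rule (an RFC 1918 secondary CIDR must come from the same RFC 1918 block as the primary), so treat the AWS documentation as the authority.

```python
import ipaddress

RFC1918 = [ipaddress.ip_network(c)
           for c in ("10.0.0.0/8", "172.16.0.0/12", "192.168.0.0/16")]

def rfc1918_block(net):
    """Return the RFC 1918 block that contains net, or None."""
    for block in RFC1918:
        if net.subnet_of(block):
            return block
    return None

def secondary_allowed(primary, secondary):
    """Simplified check (an assumption, not the full AWS rule set)."""
    p = ipaddress.ip_network(primary)
    s = ipaddress.ip_network(secondary)
    if p.overlaps(s):
        return False
    s_block = rfc1918_block(s)
    if s_block is None:
        # Not RFC 1918, e.g. 100.64.0.0/10 or a public range.
        return True
    # An RFC 1918 secondary must share the primary's RFC 1918 block.
    return s_block == rfc1918_block(p)

vpc_a = ipaddress.ip_network("10.0.0.0/21")
vpc_b = ipaddress.ip_network("10.0.0.0/21")
print(vpc_a.overlaps(vpc_b))                               # True: not routable to each other
print(secondary_allowed("10.0.0.0/21", "100.64.12.0/26"))  # True
print(secondary_allowed("100.64.12.0/26", "10.0.0.0/21"))  # False: the reversed layout
```

The last line corresponds to the reversed primary and secondary CIDRs mentioned in the edit note at the top of the post.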

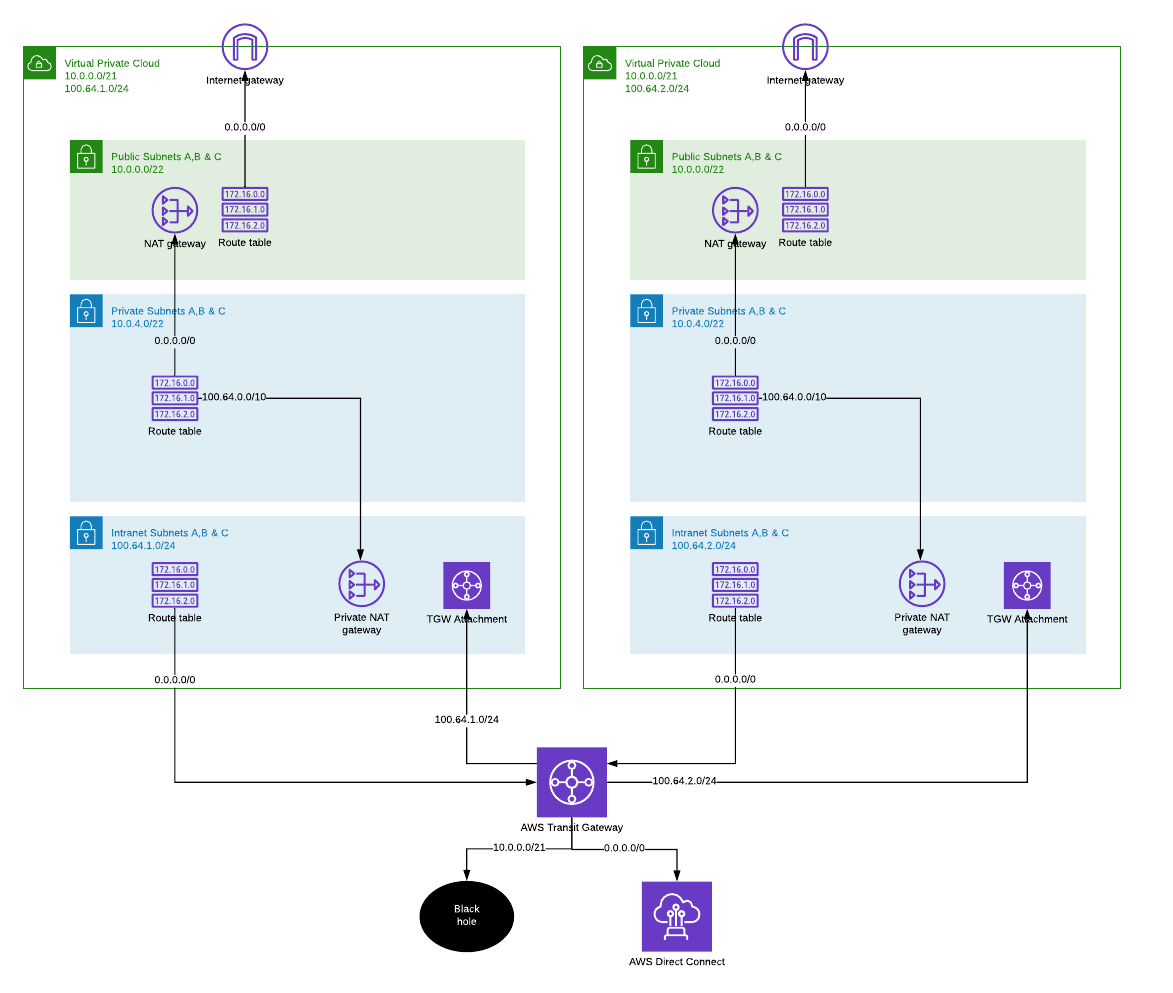

Connecting to intranet

Sooner or later, projects will need to get connected with other services via internal networks. But as we have allocated the same CIDR range for every VPC, we must first make some changes.

- Expand the VPC with a unique secondary CIDR range. To change a VPC’s primary CIDR range, you would have to recreate the VPC, but that is not an option when there are already services built into the VPC. Fortunately, it is possible to add secondary CIDRs to a VPC. Now the network admin will allocate a unique CIDR for the “intranet” subnet layer. Because the services were built into private subnets, the intranet layer needs to host only the frontend components that are visible to other internal services and users. This means we can settle for a much smaller CIDR!

- Create a ‘blackhole’ route for the VPC primary CIDR in the Transit Gateway route table. This hides the shared CIDR, which would otherwise be advertised by any of the connected VPCs. All packets to 10.0.0.0/21 will be dropped at the Transit Gateway.

- Attach the Transit Gateway to the VPC intranet layer.

- Deploy a private NAT gateway. The NAT gateway in the intranet layer will forward traffic from private subnets to other VPCs and on-prem networks via the Transit Gateway. In this example I’ve assumed my internal networks are using 100.64.0.0/10.
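The four steps above map fairly directly to EC2 API calls. As a hedged sketch, the function below only builds the request parameters for the corresponding boto3 EC2 client methods and calls nothing itself. The function name and all resource IDs are hypothetical placeholders, and it assumes the intranet subnets have already been created inside the secondary CIDR once that CIDR is associated.

```python
def intranet_connection_plan(vpc_id, primary_cidr, secondary_cidr,
                             tgw_id, tgw_route_table_id, intranet_subnet_ids):
    """Return the steps as (EC2 client method name, keyword arguments) pairs."""
    return [
        # 1. Expand the VPC with a unique secondary CIDR.
        ("associate_vpc_cidr_block",
         {"VpcId": vpc_id, "CidrBlock": secondary_cidr}),
        # 2. Drop traffic to the shared primary CIDR at the Transit Gateway.
        ("create_transit_gateway_route",
         {"TransitGatewayRouteTableId": tgw_route_table_id,
          "DestinationCidrBlock": primary_cidr,
          "Blackhole": True}),
        # 3. Attach the Transit Gateway to the intranet subnets.
        ("create_transit_gateway_vpc_attachment",
         {"TransitGatewayId": tgw_id,
          "VpcId": vpc_id,
          "SubnetIds": list(intranet_subnet_ids)}),
        # 4. Private NAT gateway in an intranet subnet (no Elastic IP).
        ("create_nat_gateway",
         {"SubnetId": intranet_subnet_ids[0],
          "ConnectivityType": "private"}),
    ]

# Placeholder IDs and a made-up secondary CIDR, for illustration only.
plan = intranet_connection_plan(
    vpc_id="vpc-EXAMPLE",
    primary_cidr="10.0.0.0/21",
    secondary_cidr="100.64.12.0/26",
    tgw_id="tgw-EXAMPLE",
    tgw_route_table_id="tgw-rtb-EXAMPLE",
    intranet_subnet_ids=["subnet-EXAMPLE-A", "subnet-EXAMPLE-B"],
)
for method, kwargs in plan:
    print(method, kwargs)
```

With boto3 installed and credentials configured, each pair could be executed as `getattr(ec2, method)(**kwargs)` where `ec2 = boto3.client("ec2")`. In practice you would also wait for the CIDR association and the attachment to become available before moving to the next step.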

Now VPCs can reach each other’s intranet subnets from their private and intranet layers, and access on-prem networks over the Direct Connect attached to the Transit Gateway. Public subnets cannot reach internal networks beyond their own VPC. It is also possible to add network access control lists (NACLs) to prevent connections from public subnets to local intranet subnets, which are otherwise reachable because by default all subnets in a single VPC have local routes.

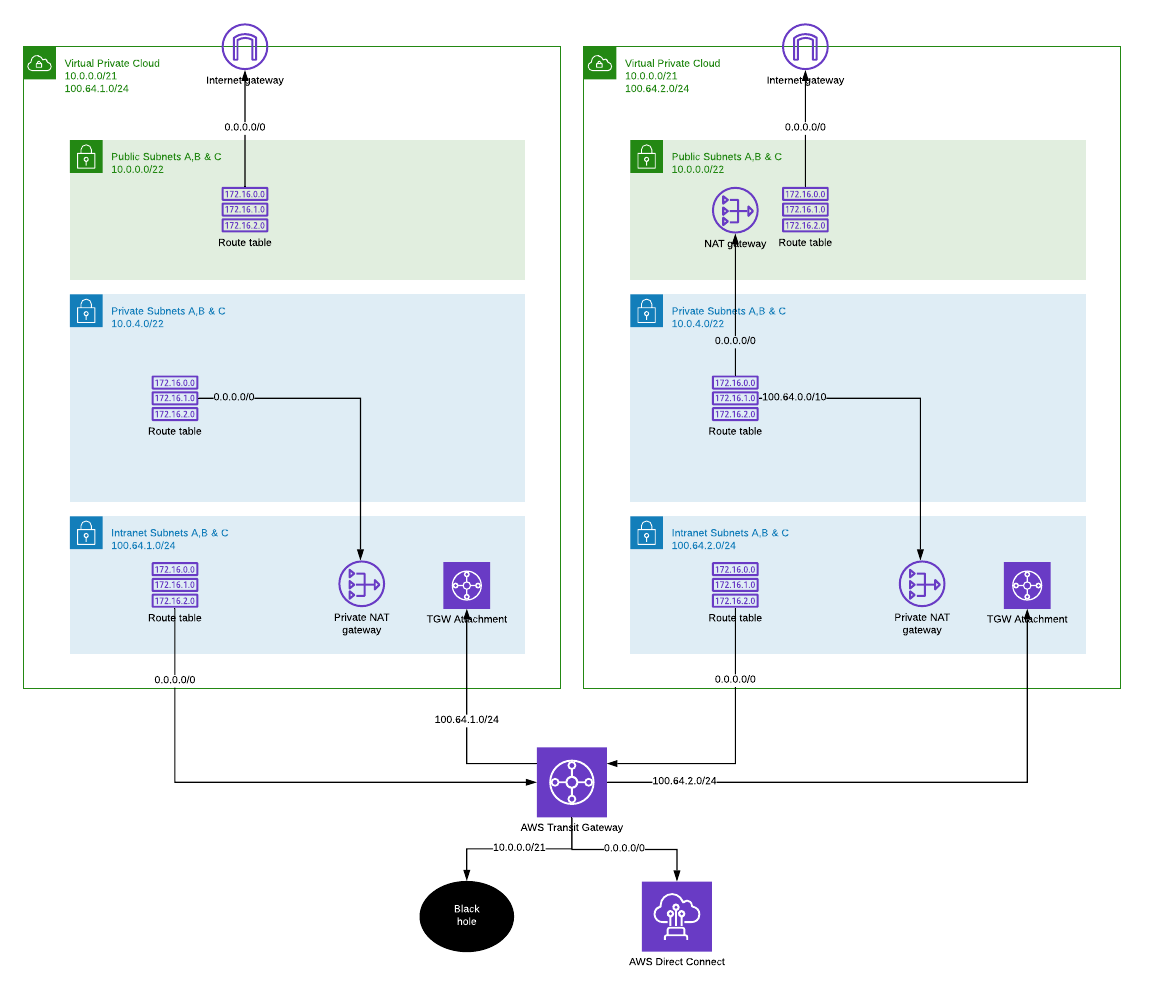

Changing the default route

There needs to be a default route to the internet, either via the VPC’s own NAT and internet gateways, or via the private NAT gateway towards the internal networks, from where packets can be routed out to the internet through egress filtering or similar.

On the left, the private route table has been modified to route all non-local traffic to the private NAT gateway in the intranet subnet. From there on, packets are sent to a shared outbound VPC, optionally through packet inspection and egress filtering.

In this configuration it is still possible to expose services to the internet directly from the VPC’s public subnets through the internet gateway. If this is not desirable, we should consider removing the public subnet layer.
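Route tables pick the most specific matching route, which is why a default route can coexist with the local routes and with the blackhole route on the Transit Gateway. Here is a small sketch of that longest-prefix lookup using Python's `ipaddress` module. The intranet CIDRs and target names are made-up placeholders, and the real route tables contain more than what is shown.

```python
import ipaddress

def next_hop(route_table, destination):
    """Return the target of the most specific route containing destination."""
    addr = ipaddress.ip_address(destination)
    matches = [(ipaddress.ip_network(cidr), target)
               for cidr, target in route_table.items()
               if addr in ipaddress.ip_network(cidr)]
    if not matches:
        return None
    # Longest prefix wins.
    return max(matches, key=lambda m: m[0].prefixlen)[1]

# Private subnet route table after changing the default route.
private_routes = {
    "10.0.0.0/21": "local",              # shared primary CIDR
    "100.64.12.0/26": "local",           # secondary (intranet) CIDR, made up
    "0.0.0.0/0": "private-nat-gateway",  # everything non-local
}

# Transit Gateway route table (illustrative).
tgw_routes = {
    "10.0.0.0/21": "blackhole",
    "100.64.12.0/26": "attachment-vpc-a",
    "100.64.12.64/26": "attachment-vpc-b",
    "0.0.0.0/0": "attachment-outbound-vpc",
}

print(next_hop(private_routes, "10.0.1.5"))      # local
print(next_hop(private_routes, "100.64.200.9"))  # private-nat-gateway
print(next_hop(tgw_routes, "10.0.1.5"))          # blackhole
print(next_hop(tgw_routes, "100.64.12.70"))      # attachment-vpc-b
```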

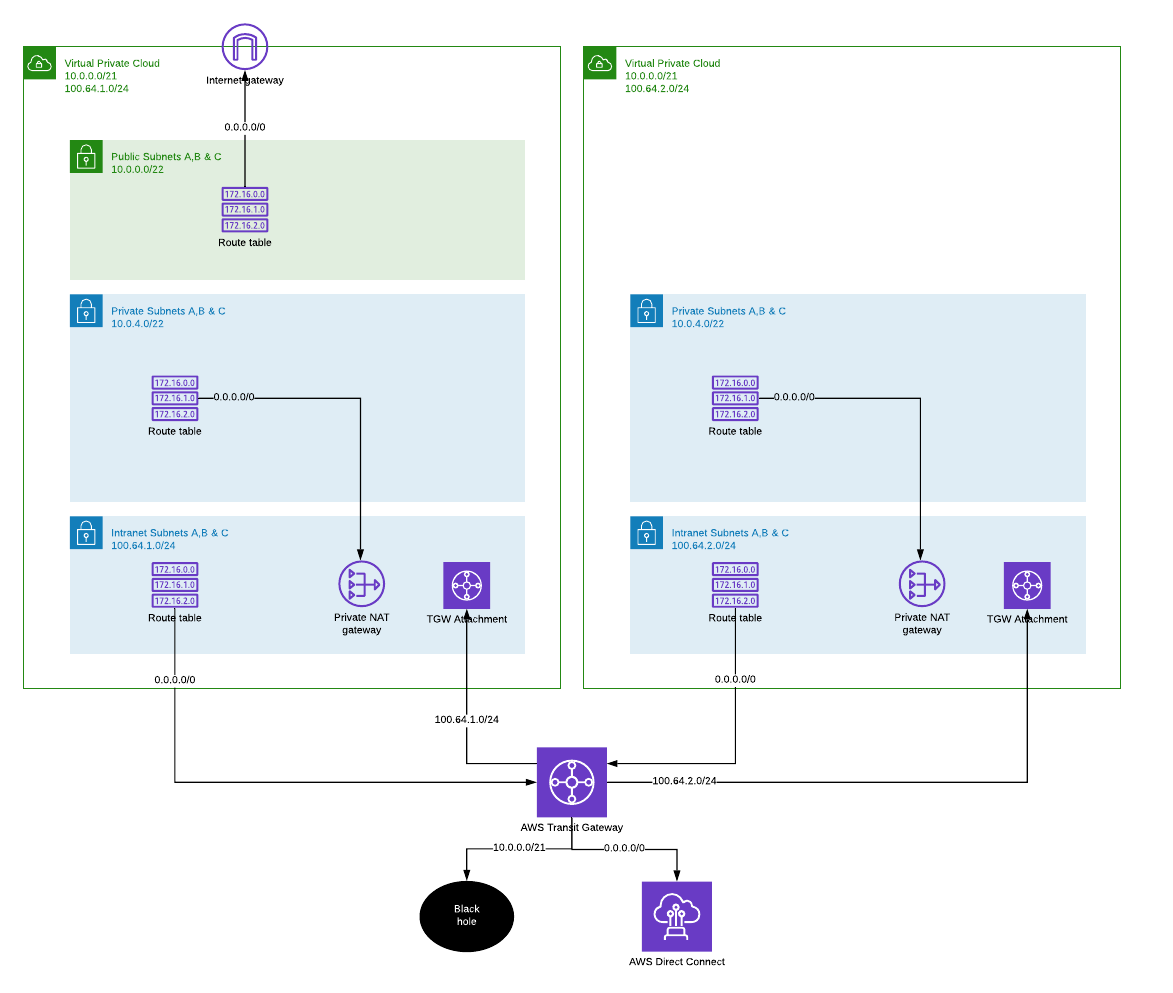

Removing the local internet access

Once internet access has been established via intranet subnets and private NAT gateways, it is possible to remove the internet gateway and public subnets completely from the original VPC design. This would also allow centralized filtering and traffic inspection for inbound connections.

On the right, public subnets have been removed and all traffic is routed to the Transit Gateway. All services exposed from the VPC are visible only to internal networks. If public services are going to be hosted in this VPC, there must be some kind of proxy layer built in a VPC that has an internet gateway. This would provide a centralized control point for inbound connections, but also limit the development team’s control over configuration details.

One size doesn’t have to fit all. VPCs can have different network architectures, even though both began with the same design.

Summary

It is possible to allow developers to build their own VPC networks in a way that doesn’t prevent connecting them later to internal networks and other VPCs. The example above started with 2 isolated VPCs and then modified (but did not rebuild) them to meet 2 different use cases where connectivity with other resources and to the internet was required. And while doing that, it saved a lot of precious IP address space while still leaving headroom in the subnets to meet future needs.
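To put a rough number on the address savings, the sketch below compares the routable space consumed when every VPC gets a unique /21 with the space consumed when only a small intranet CIDR is unique per VPC. The figure of 50 VPCs and the /26 intranet size are assumptions chosen for illustration, not values from this post.

```python
import ipaddress

def routable_addresses(vpc_count, routable_cidr_per_vpc):
    """Total routable addresses consumed across vpc_count VPCs."""
    per_vpc = ipaddress.ip_network(routable_cidr_per_vpc).num_addresses
    return vpc_count * per_vpc

VPC_COUNT = 50  # assumed number of VPCs

unique_primary = routable_addresses(VPC_COUNT, "10.0.0.0/21")    # unique /21 each
shared_primary = routable_addresses(VPC_COUNT, "100.64.0.0/26")  # unique /26 each

print(unique_primary)                    # 102400
print(shared_primary)                    # 3200
print(unique_primary // shared_primary)  # 32
```

Each VPC still has the full /21 available internally, so the saving applies only to the routable address space that has to be coordinated with the network team.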

P.S. How can I connect to things built into private subnets if they are behind NAT from both the internet and intranet directions? Do I need to build internal bastions or what?

Friends don’t let friends build more bastions. You can connect to EC2 instances with SSM Session Manager or, if you need to get into private subnets directly, e.g. to connect your db client to RDS, you can build a temporary tunnel into the VPC. All this without managing EC2 bastions, and using AWS IAM for authorization!

P.P.S. After publishing this post I found Advanced Architectures with AWS Transit Gateway from March 2020, which is highly recommended if you are working with TGWs. Starting from 36:30, Tom Adamski presents a similar architecture to mine, but emphasizes the IPv4 preservation point of view.